Hi there,

I'm having a strange issue while rendering my scenes over the internal network. I have a scene set up for path tracing: just 100k polygons and a small camera animation running at 25 fps for 100 frames. My local machine has one RTX 3090, the render node has two RTX 3090s. Both run Windows 10. When using the Live Viewer with network rendering enabled, the remote GPUs are used for computing. GPU-Z confirms this by showing the GPU utilization.

But when rendering the scene via the Picture Manager in C4D R.20 (PT, 1000 samples), I can see that the scene is being transferred over the network to the render node, but the GPU utilization there is 0. Also, the Picture Manager doesn't indicate that the remote node is rendering (the Octane info just shows: updating).
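As a cross-check to GPU-Z, the utilization on the render node could also be sampled from a script while the Picture Manager job runs. The following is only a minimal sketch, assuming `nvidia-smi` from the installed Nvidia driver is on the PATH of the render node; the function names are made up for illustration and are not part of Octane or C4D.

```python
import subprocess

# nvidia-smi query that prints one CSV line per GPU: index, name, utilization in percent.
QUERY = [
    "nvidia-smi",
    "--query-gpu=index,name,utilization.gpu",
    "--format=csv,noheader,nounits",
]


def parse_utilization(csv_text):
    """Turn nvidia-smi CSV output into a list of (index, name, utilization) tuples."""
    rows = []
    for line in csv_text.strip().splitlines():
        parts = [p.strip() for p in line.split(",")]
        if len(parts) < 3:
            continue  # skip lines that do not look like a GPU row
        try:
            util = int(parts[-1])
        except ValueError:
            util = None  # the driver may report a non-numeric value
        rows.append((int(parts[0]), ", ".join(parts[1:-1]), util))
    return rows


def read_utilization():
    """Run nvidia-smi once and return the parsed rows (requires an Nvidia driver)."""
    out = subprocess.run(QUERY, capture_output=True, text=True, check=True).stdout
    return parse_utilization(out)


def idle_gpus(rows, threshold=0):
    """Return the indices of GPUs whose utilization is at or below the threshold."""
    return [idx for idx, _name, util in rows if util is not None and util <= threshold]
```

Calling `idle_gpus(read_utilization())` every few seconds during the Picture Manager render would show whether both cards on the node really stay at 0.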

I know that in theory it could be a timing issue: the remote node is unable to send its update to the host because the host has finished rendering faster. But even if I crank the samples up to 4000, it doesn't make any difference. After some frames it just freezes and doesn't render any further. If I then quit the render process, I get the following error message on the render node:

Launching net render node (10021700) with master xxx.xxx.xxx.xxx:1025

CUDA error 201 on device 0: invalid device context

-> could not get memory info

CUDA error 201 on device 0: invalid device context

-> could not get memory info

CUDA error 201 on device 0: invalid device context

-> could not get memory info

device 0: CUDA module wasn't unloaded!

device 0: CUDA context wasn't destroyed!

CUDA error 201 on device 1: invalid device context

-> could not get memory info

CUDA error 201 on device 1: invalid device context

-> could not get memory info

CUDA error 201 on device 1: invalid device context

-> could not get memory info

device 1: CUDA module wasn't unloaded!

device 1: CUDA context wasn't destroyed!
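For reference, error code 201 in the CUDA driver API is `CUDA_ERROR_INVALID_CONTEXT`, which matches the "invalid device context" text above. When the node prints many such lines, a short script can count them per device. This is just a sketch that assumes the log lines look exactly like the ones pasted above; the function name is made up for illustration.

```python
import re
from collections import Counter

# Matches lines such as "CUDA error 201 on device 0: invalid device context".
CUDA_ERROR = re.compile(r"CUDA error (\d+) on device (\d+): (.+)")


def summarize_cuda_errors(log_text):
    """Count CUDA error lines, keyed by (device index, error code, message)."""
    counts = Counter()
    for line in log_text.splitlines():
        match = CUDA_ERROR.search(line)
        if match:
            code, device, message = match.groups()
            counts[(int(device), int(code), message.strip())] += 1
    return counts
```

Feeding it the render node output saved to a text file gives one entry per device and error code, which makes it easy to see whether every GPU on the node is affected.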

Local rendering does work without any issues.

I'm using:

- C4D R.20

- Octane 2020.2.5 - R3

- Nvidia Studio Driver 472.12

Kind Regards

René

Network Render Node does not render.

6 posts

• Page 1 of 1

Re: Network Render Node does not render.

Hi,

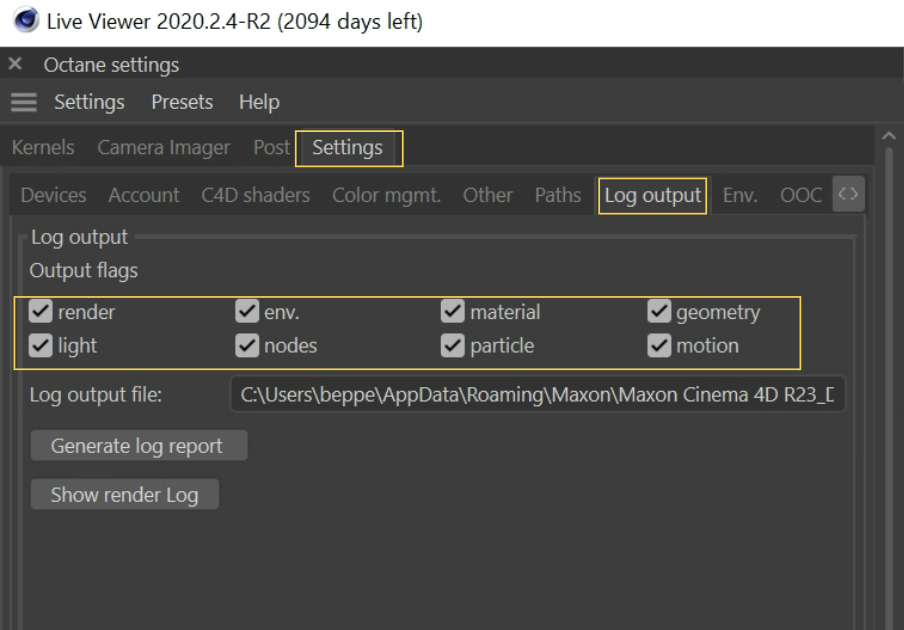

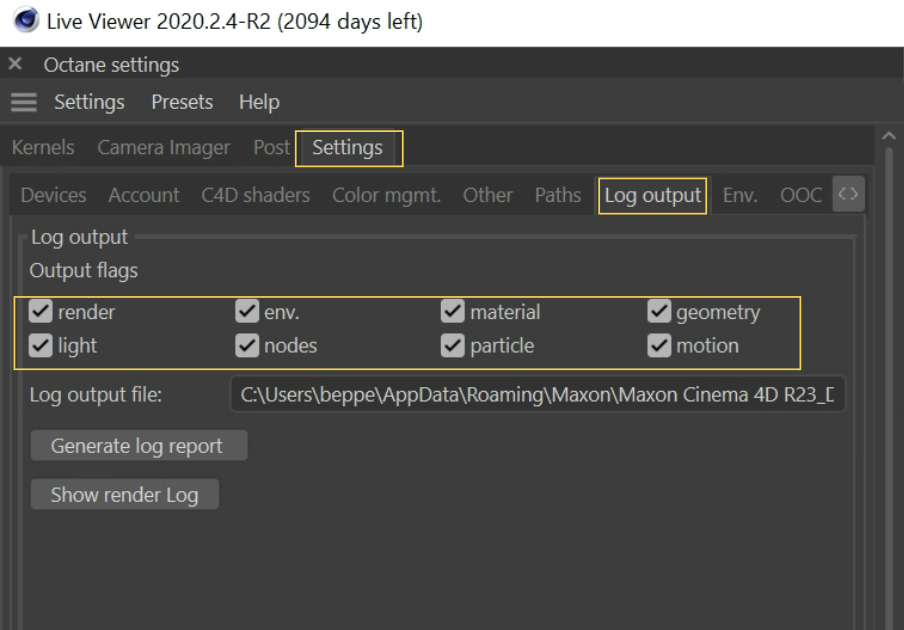

Please open the scene, then go to the c4doctane Settings/Log Output tab and enable all the Log option checkboxes.

Then, with Net render enabled, load the scene in Live View and then render it in the Picture Viewer until the Render-Node crashes. After that, reopen c4d, navigate to the c4doctane Settings/Log Output tab, press the Generate log report button, and share the resulting octanelog_report.zip file, thanks.
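Before attaching the archive to a public thread, it may be worth checking what it contains. A minimal sketch using Python's standard `zipfile` module follows; the location of `octanelog_report.zip` on disk and the function name are assumptions, and nothing here is specific to Octane.

```python
import zipfile


def list_report_contents(zip_path):
    """Return (file name, size in bytes) for every entry, without extracting anything."""
    with zipfile.ZipFile(zip_path) as archive:
        return [(info.filename, info.file_size) for info in archive.infolist()]
```

For example, `list_report_contents("octanelog_report.zip")` run from the folder where the report was saved lists the log files that would be shared.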

ciao Beppe

bepeg4d - Octane Guru

- Posts: 9956

- Joined: Wed Jun 02, 2010 6:02 am

- Location: Italy

Re: Network Render Node does not render.

Hello,

We changed the GPUs in our workstations from 2080 Ti to 3090, and now we have the same problem.

Did you find a solution?

Best regards,

Denis

- dasmodular

- Licensed Customer

- Posts: 30

- Joined: Tue Apr 17, 2018 10:59 am

Re: Network Render Node does not render.

Hello,

We got it running!

We did a clean install of Octane Render with CCleaner and a clean install of the GPU driver with DDU, disabled fast boot in BIOS and Windows Smart Start, but in the end an optional Windows update fixed the issue (21H2 KB5016688).

Best regards,

Denis

- dasmodular

- Licensed Customer

- Posts: 30

- Joined: Tue Apr 17, 2018 10:59 am

Re: Network Render Node does not render.

Actually, we found out that RTX acceleration was causing this issue. Turning RTX off was the solution. All the cards we use are RTX...

Kind regards,

Denis

- dasmodular

- Licensed Customer

- Posts: 30

- Joined: Tue Apr 17, 2018 10:59 am

Re: Network Render Node does not render.

Hi Denis,

Difficult to say without a log... Which exact version of Render-Node are you using?

Have you tried the latest 2022.1 Studio+ version?

Here is the link to Standalone 2022.1:

https://render.otoy.com/customerdownloa ... _1_win.exe

Here is the link to c4doctane 2022.1-R2:

https://render.otoy.com/customerdownloa ... R2_win.rar

And the link to the new Render Node version 2022.1:

https://render.otoy.com/customerdownloa ... de_win.exe

ciao,

Beppe

bepeg4d - Octane Guru

- Posts: 9956

- Joined: Wed Jun 02, 2010 6:02 am

- Location: Italy
